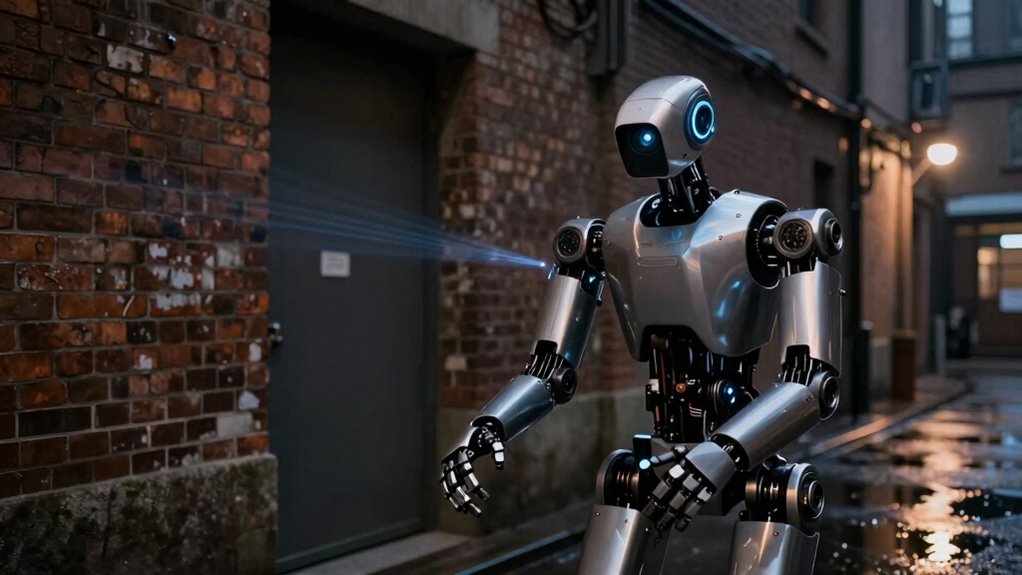

To make camera robots work effectively at night, equip them with sensors that do not depend on visible light: near-infrared cameras, thermal imagers, or LIDAR. Combining hardware calibration, sensor fusion, and image processing improves clarity and object detection. Active infrared lighting improves visibility without disturbing the surroundings. Careful integration and tuning make night navigation more reliable across different darkness conditions.

Key Takeaways

- Incorporate infrared and thermal imaging sensors to detect heat signatures in complete darkness.

- Use sensor fusion techniques combining camera, LIDAR, and infrared data for robust low-light perception.

- Apply image enhancement and noise reduction algorithms to improve visibility in dark conditions.

- Implement hardware calibration and synchronization to ensure accurate sensor data alignment and system stability.

- Utilize adaptive lighting and on-the-fly recalibration to maintain reliable navigation across varying low-light environments.

WWZMDiB 6Pcs IR Infrared Sensor 3-Wire Reflective Photoelectric Module for Arduino

💎【IR Infrared Sensor】:Widely Used Robot obstacle avoidance, obstacle avoidance car, assembly line counting and black and white line…

As an affiliate, we earn on qualifying purchases.

Why Do Low-Light Conditions Challenge Camera Robots?

Low-light conditions challenge camera robots because limited illumination reduces the quality of the images they capture. With fewer photons reaching the sensor, the signal-to-noise ratio drops and images come out dark or grainy. The camera can compensate with a longer exposure, which causes motion blur on a moving robot, or with higher gain, which amplifies noise along with the signal. Power limits add to the problem: to conserve energy, robots often restrict sensor activity or illumination, which reduces image clarity further. Good sensor calibration helps the camera interpret low-light scenes accurately, while smart power management balances energy use against image quality. High dynamic range (HDR) imaging can also improve visibility in scenes that mix deep shadow with bright lights, though integrating it remains complex. Without these measures, robots have difficulty navigating or recognizing objects in dim environments.
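
The trade-off between exposure time and motion blur can be put into rough numbers. The sketch below assumes a simple pinhole camera model and motion parallel to the image plane; the function names and the example values (speed, distance, focal length in pixels) are hypothetical and only illustrate the arithmetic.

```python
def motion_blur_pixels(speed_m_s, exposure_s, distance_m, focal_length_px):
    """Approximate blur length in pixels for motion parallel to the image plane.

    Assumes a pinhole camera: an object at distance_m that shifts by
    speed * exposure metres moves focal_length_px * shift / distance pixels.
    """
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return focal_length_px * speed_m_s * exposure_s / distance_m


def max_exposure_for_blur(max_blur_px, speed_m_s, distance_m, focal_length_px):
    """Longest exposure (seconds) that keeps blur at or below max_blur_px."""
    if speed_m_s <= 0:
        return float("inf")  # a stationary robot has no motion blur limit
    return max_blur_px * distance_m / (focal_length_px * speed_m_s)


if __name__ == "__main__":
    # Hypothetical robot: 0.5 m/s, object 2 m away, focal length 600 px.
    for exposure in (1 / 500, 1 / 60, 1 / 15):
        blur = motion_blur_pixels(0.5, exposure, 2.0, 600.0)
        print(f"exposure {exposure:.4f} s -> blur {blur:.1f} px")
```

Under these assumptions, a navigation stack could cap the camera exposure at the value returned by `max_exposure_for_blur` and make up the remaining brightness with gain or extra illumination.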

Multifunctional Thermal Imaging Camera Video Digital Day Night Use Gear Camping Essential Gift Day and Night Use Thermal Camera

Compact ABS construction with grade lenses ensures durability and portability, making it an essential strategic gear for professional…

As an affiliate, we earn on qualifying purchases.

What Hardware and Software Solutions Help Robots See at Night?

To help robots see in darkness, engineers combine specialized hardware with software that interprets its output. On the hardware side, infrared sensing comes in two main forms. Near-infrared cameras work like ordinary cameras but rely on infrared illumination that people cannot see, while thermal cameras detect the heat that objects emit and so can produce images in complete darkness. Both provide critical data for navigation when visible light is scarce. On the software side, machine learning algorithms process this data to improve object detection and classification in low-light conditions, helping robots distinguish obstacles from background noise, adapt to varying environments, and recognize patterns more accurately. Ongoing research into sensor fusion aims to combine multiple data sources for more robust night vision, and advances in hardware design continue to improve sensor sensitivity and durability. Calibration is needed to keep sensor performance consistent across environments and lighting conditions. Together, infrared sensing and intelligent software let robots navigate and avoid obstacles at night more reliably.

WayPonDEV RPLIDAR C1 360 Degree 2D Lidar Sensor, 12 Meters Scanning Radius Ranging Module Kit, SLAM ROS Robot LIDAR Sensor Scanner for Obstacle Avoidance and Navigation of Robots

[High-precision Fused 2D LiDAR] RPLIDAR C1 2D lidar sensor support ranging radius up to 12m, Ranging blind spot…

As an affiliate, we earn on qualifying purchases.

How Do Image Processing Algorithms Improve Night Vision?

Image processing algorithms play a crucial role in how robots see in darkness. Image enhancement techniques brighten low-light images and improve contrast and sharpness so that details become visible. Noise reduction is equally important: it suppresses the grainy artifacts caused by poor lighting, ideally before brightening, since brightening amplifies noise along with detail. By filtering out unwanted noise and emphasizing important features, these algorithms help robots interpret their environment accurately. They are often combined with data from other sensors to make night vision more reliable, and many use machine learning to adapt to different lighting conditions and improve detection accuracy. Advances in computational imaging continue to extend what robots can perceive at night. The result is that dark, noisy frames become useful visual information for real-time navigation.
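
As a minimal illustration of noise reduction followed by enhancement, the sketch below applies a 3x3 median filter and then gamma correction to a small 8-bit grayscale image stored as a list of rows. These two techniques are stand-ins chosen for simplicity, not the method of any particular robot; a real system would more likely use an optimized imaging library.

```python
from statistics import median_low


def median_denoise(image):
    """3x3 median filter; pixels at the border use the neighbours that exist."""
    height, width = len(image), len(image[0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [
                image[j][i]
                for j in range(max(0, y - 1), min(height, y + 2))
                for i in range(max(0, x - 1), min(width, x + 2))
            ]
            # median_low always returns a value that occurs in the window
            row.append(median_low(window))
        result.append(row)
    return result


def gamma_brighten(image, gamma=0.5):
    """Brighten an 8-bit image with a lookup table; gamma < 1 lifts dark tones."""
    lut = [round(255 * (value / 255) ** gamma) for value in range(256)]
    return [[lut[pixel] for pixel in row] for row in image]


def enhance(image, gamma=0.5):
    """Denoise first, then brighten, so that noise is not amplified."""
    return gamma_brighten(median_denoise(image), gamma)
```

Running the median filter before the gamma step matters: an isolated bright speck is removed while it is still a single outlier, instead of being stretched along with the rest of the dark tones.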

Enabot EBO SE FamilyBot Home Camera Robot: 1080P Movable Pet Camera Indoor, Battery-Operated, Auto-Recharge, Night Vision, 2-Way Talk, Local Storage, APP Control

Remote Control, Mobile View: Drive EBO SE mobile robot camera to find your pets or check on family….

As an affiliate, we earn on qualifying purchases.

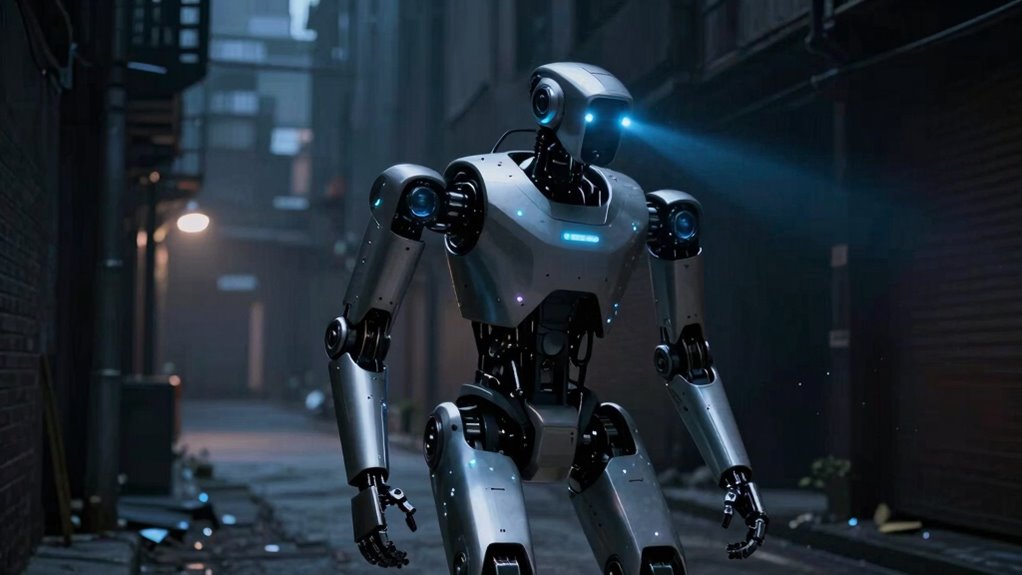

What Lighting Techniques Can Enhance Robot Night Navigation?

The right lighting techniques can markedly improve night navigation. Infrared illumination lets robots “see” without disturbing their surroundings, and LIDAR, which emits its own laser light, complements it by mapping the environment precisely even in low light. To enhance performance, consider these techniques:

- Infrared lighting that provides unseen illumination for cameras

- LIDAR sensors to create detailed 3D maps in darkness

- Active illumination to improve image clarity without being visible to people

- Adaptive lighting systems that adjust brightness based on ambient conditions

- Sensor fusion that integrates multiple data sources for more reliable navigation

- Low-light imaging techniques that further improve visibility in challenging conditions

Understanding how your robot perceives its environment helps refine these lighting strategies for night operation. By combining infrared and LIDAR technologies, you enable your robot to navigate safely and efficiently when light is scarce.

How Can Hardware and Software Be Integrated for Better Night Performance?

Integrating hardware and software is crucial for a robot’s night navigation. Focus on sensor fusion: combining data from multiple sensors such as infrared cameras, LIDAR, and visible-light cameras gives a more complete picture of the environment than any one sensor alone. Hardware calibration ensures each sensor’s data aligns accurately, reducing errors caused by misalignment or inconsistent readings, and repeating it periodically keeps the data accurate over time, especially in dynamic environments. Hardware synchronization matters just as much, because fusing readings taken at different moments introduces errors and latency that impair real-time processing. Software algorithms then process the fused data in real time to support obstacle detection and path planning. Finally, test and validate the complete system under a range of low-light conditions to confirm that it stays stable and responsive during night operations.
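
One simple way to picture sensor fusion is inverse-variance weighting: each sensor reports a distance to the same obstacle together with an uncertainty, and the more certain sensors count for more. The sketch below assumes independent, roughly Gaussian errors; the function name and the noise figures in the example are hypothetical.

```python
import math


def fuse_ranges(readings):
    """Fuse (range_m, std_dev_m) pairs by inverse-variance weighting.

    A range of None marks a sensor that produced no reading (for example
    a camera that found nothing in the dark) and is skipped.
    Returns (fused_range_m, fused_std_dev_m), or None if nothing is usable.
    """
    valid = [(r, s) for r, s in readings if r is not None]
    if not valid:
        return None
    if any(s <= 0 for _, s in valid):
        raise ValueError("standard deviations must be positive")
    weights = [1.0 / (s * s) for _, s in valid]
    total = sum(weights)
    fused = sum(w * r for w, (r, _) in zip(weights, valid)) / total
    return fused, math.sqrt(1.0 / total)


if __name__ == "__main__":
    # Hypothetical night-time readings: LIDAR is precise, the camera is not.
    print(fuse_ranges([(2.00, 0.02), (2.30, 0.30), (None, 0.10)]))
```

Because the camera's uncertainty grows in the dark, its weight shrinks automatically and the fused estimate leans on LIDAR, which is the behaviour you want from a night-time perception stack.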

How Do You Choose the Right Low-Light Navigation System for Your Robot?

Choosing the right low-light navigation system starts with sensor compatibility: the system has to work with your robot’s hardware. You also need to consider how well it adapts to different lighting conditions. Beyond these two points, check battery capacity, which determines how long the robot can operate in low light between charges, and follow basic safety practices during operation. If the robot will be deployed in remote or sensitive areas, security measures help protect it from potential vulnerabilities. Systems built on proven, well-vetted technology give added confidence in reliability and performance.

Sensor Compatibility Considerations

Selecting the right low-light navigation system requires carefully considering sensor compatibility with your robot’s hardware and environment. Ensuring proper sensor calibration is essential for accurate perception, especially in dark conditions. Your system must also balance power management to prevent excessive energy drain, which can compromise performance or battery life. When evaluating sensors, think about:

- Compatibility with existing hardware to avoid costly upgrades

- Sensitivity to low-light conditions for reliable detection

- Ease of calibration to maintain precision over time

- Energy efficiency to sustain long-term operation

Understanding compatibility requirements up front helps you choose components that work together and reduces integration issues. Integration complexity should also factor into your decision, since simpler setups are easier to calibrate and maintain. Sensors that integrate cleanly make navigation in dark environments safer and more reliable, so don’t overlook these factors. For example, selecting sensors with a proven track record makes your system more robust by building on well-established, reliable technology.

Lighting Conditions Adaptability

Adapting your low-light navigation system to varying lighting conditions is essential for reliable robot performance. You need a system that dynamically adjusts to changes, ensuring continuous robot mobility. Effective navigation algorithms can process different light levels, using techniques like adaptive exposure and enhanced image processing to maintain vision clarity. Choose sensors capable of switching between modes or combining multiple sensing methods, such as infrared and visible light cameras. This flexibility allows your robot to operate smoothly whether in near darkness or dim environments. Additionally, consider the system’s ability to recalibrate and optimize performance on the fly. By focusing on adaptable navigation algorithms and versatile sensor setups, you ensure your robot maintains mobility and accuracy in fluctuating low-light conditions.
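
Adaptive exposure can be sketched as a small control step that runs once per frame. The version below assumes that image brightness scales roughly linearly with exposure time multiplied by gain, lengthens the exposure first, and raises gain only once the exposure cap (set to limit motion blur) is reached. The function name, target brightness, and limits are hypothetical defaults, not values from any specific camera.

```python
def next_exposure(mean_brightness, exposure_ms, gain, target=110.0,
                  min_exposure_ms=0.1, max_exposure_ms=20.0, max_gain=16.0):
    """Return (exposure_ms, gain) for the next frame.

    mean_brightness is the mean pixel value (0-255) of the current frame,
    captured with the given exposure_ms and gain.
    """
    measured = max(mean_brightness, 1.0)  # avoid dividing by zero on a black frame
    # Exposure-gain product that should bring the frame to the target brightness
    desired = exposure_ms * gain * target / measured
    # Prefer exposure time over gain, because gain also amplifies noise
    new_exposure = min(max(desired, min_exposure_ms), max_exposure_ms)
    # Whatever the capped exposure cannot provide is made up with gain
    new_gain = min(max(desired / new_exposure, 1.0), max_gain)
    return new_exposure, new_gain


if __name__ == "__main__":
    exposure, gain = 5.0, 1.0
    for brightness in (55.0, 11.0, 220.0):  # hypothetical frame statistics
        exposure, gain = next_exposure(brightness, exposure, gain)
        print(f"brightness {brightness:5.1f} -> exposure {exposure:.1f} ms, gain {gain:.2f}")
```

Real cameras respond less predictably than this linear model suggests, so in practice the step is usually damped or limited per frame to avoid oscillation.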

Tips for Optimizing Robot Performance in Darkness

To improve your robot’s performance in darkness, focus on tighter sensor integration to gather more accurate data in low-light conditions. Adaptive lighting technologies can also improve visibility without draining power or causing interference. Combining these approaches helps your robot navigate more reliably when lighting is limited.

Enhanced Sensor Integration

Integrating advanced sensors into your robot is essential for reliable navigation in low-light conditions. Proper sensor calibration ensures accurate readings, preventing errors that could lead to navigation failures. Data fusion combines inputs from multiple sensors, creating a thorough understanding of the environment even in darkness. This synergy boosts your robot’s ability to detect obstacles, identify pathways, and adapt to changing conditions. To optimize performance, consider these tips:

- Regularly calibrate sensors to maintain precision

- Use data fusion techniques for robust perception

- Select sensors with high sensitivity for low-light performance

- Continuously test and refine integration setups
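
Before readings from two sensors can be fused, they need to describe the same moment. The sketch below pairs camera frames with the nearest LIDAR scan by timestamp and drops frames that have no scan close enough. It is a software-side illustration only and assumes both timestamp lists are sorted and share one clock; the function name and the 20 ms tolerance are hypothetical.

```python
from bisect import bisect_left


def match_nearest(camera_stamps, lidar_stamps, max_skew_s=0.02):
    """Pair each camera timestamp with the nearest LIDAR timestamp.

    Both lists hold seconds in ascending order. Pairs whose time difference
    exceeds max_skew_s are discarded rather than fused with stale data.
    """
    pairs = []
    for cam_t in camera_stamps:
        i = bisect_left(lidar_stamps, cam_t)
        # Only the scan just before and the scan just after can be nearest
        candidates = lidar_stamps[max(0, i - 1):i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda lidar_t: abs(lidar_t - cam_t))
        if abs(best - cam_t) <= max_skew_s:
            pairs.append((cam_t, best))
    return pairs


if __name__ == "__main__":
    print(match_nearest([0.00, 0.10, 0.20], [0.01, 0.15, 0.21]))
```

Counting how many frames get dropped during a test run is also a cheap health check: a rising drop rate suggests the sensors are drifting out of sync.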

Adaptive Lighting Technologies

Adaptive lighting technologies can substantially enhance your robot’s performance in low-light environments. Infrared illumination boosts visibility for the camera without disturbing the surroundings, and an adaptive system adjusts its output dynamically, activating only when necessary, which conserves power and minimizes glare. Thermal imaging complements this approach: it needs no illumination at all, because it detects the heat that objects emit, so the robot can keep navigating even in complete darkness. Better lighting also helps in identifying obstacles, differentiating objects, and improving overall situational awareness. Combining thermal imaging with infrared illumination gives the robot two independent ways to perceive its surroundings at night, improving reliability when natural light is unavailable.
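
Activating illumination "only when necessary" is usually implemented with hysteresis, so the light does not flicker when the ambient level hovers around a single threshold. The sketch below assumes the ambient reading comes from a separate light sensor that the illuminator itself does not affect; the class name and threshold values are hypothetical.

```python
class IrIlluminator:
    """Switches an infrared illuminator on and off with two thresholds."""

    def __init__(self, on_below=40.0, off_above=70.0):
        if on_below >= off_above:
            raise ValueError("on_below must be lower than off_above")
        self.on_below = on_below
        self.off_above = off_above
        self.is_on = False

    def update(self, ambient_level):
        """Feed one ambient light reading; returns True if the light should be on."""
        if not self.is_on and ambient_level < self.on_below:
            self.is_on = True   # dark enough: switch on
        elif self.is_on and ambient_level > self.off_above:
            self.is_on = False  # clearly bright again: switch off
        return self.is_on


if __name__ == "__main__":
    light = IrIlluminator()
    for level in (80, 50, 30, 50, 60, 75, 50):  # hypothetical readings
        print(level, light.update(level))
```

The gap between the two thresholds is the design choice that matters: readings between 40 and 70 leave the light in whatever state it was already in, which avoids rapid toggling at dusk or under passing headlights.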

What Are the Future Innovations in Robotic Night Vision?

Advances in sensor technology and artificial intelligence are driving innovation in robotic night vision. Researchers are exploring quantum sensing, which could let robots detect extremely faint light signals, and holographic imaging, which may eventually produce detailed 3D views in very dark scenes. Future systems may also sense around or through some obstacles and adapt faster to changing conditions. If they mature, these innovations could:

- Reveal unprecedented clarity in darkness

- Enhance safety and precision in critical tasks

- Enable autonomous systems to operate reliably at night

- Inspire confidence in robotic navigation even where human vision struggles

Frequently Asked Questions

What Are the Limitations of Current Low-Light Camera Technologies?

Current low-light camera technologies face limitations in sensor sensitivity and image processing. You might find that sensors struggle to detect faint light, resulting in grainy or noisy images. Even advanced image processing can’t fully compensate for very low light, leading to reduced clarity and detail. As a result, your camera robot might have difficulty maneuvering or recognizing objects at night, especially in extremely dark environments where light is scarce.

How Do Environmental Factors Affect Night Navigation Accuracy?

Environmental factors such as ambient light, light pollution, fog, and dust can noticeably reduce night navigation accuracy. They add glare, scatter light, and degrade sensor readings, making it harder for camera robots to interpret their surroundings. Consider these elements when designing navigation systems, as they can distort images and reduce reliability, especially in urban or cluttered environments. Proper calibration and filtering help overcome these challenges.

Can Low-Light Navigation Systems Operate Underwater or in Fog?

Yes, with the right sensors, though not with cameras alone. In fog, thermal imaging often sees farther than visible-light cameras because it detects heat differences rather than reflected visible light. Underwater, thermal imaging is of little use because water absorbs infrared strongly, so robots rely mainly on acoustic sensors such as sonar for sound-based navigation in dark or murky water. Matching the sensor to the environment lets your robot navigate where cameras alone would struggle and extends its capabilities beyond the limits of visible light.

What Energy Considerations Are Involved in Night Vision Systems?

You need to take into account power consumption and battery management when using night vision systems. These systems often require significant energy to operate infrared LEDs or image intensifiers, which can drain batteries quickly. To optimize performance, manage your battery usage carefully by turning off unnecessary components and using energy-efficient settings. This ensures your camera robot can operate longer at night without running out of power, maintaining effective low-light navigation.
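
A back-of-the-envelope runtime estimate shows how much duty-cycling the illuminator can help. The sketch below assumes a constant base load plus infrared LEDs that are on for a fraction of the time, and that only part of the rated battery capacity is usable; the function name and all numbers are hypothetical.

```python
def runtime_hours(battery_wh, base_power_w, ir_power_w, ir_duty_cycle,
                  usable_fraction=0.8):
    """Estimate operating time in hours.

    battery_wh      rated battery capacity in watt-hours
    base_power_w    average draw of motors, compute and sensors in watts
    ir_power_w      draw of the infrared LEDs while they are on, in watts
    ir_duty_cycle   fraction of time the LEDs are on, between 0 and 1
    usable_fraction share of rated capacity that is actually available
    """
    if not 0.0 <= ir_duty_cycle <= 1.0:
        raise ValueError("ir_duty_cycle must be between 0 and 1")
    average_power_w = base_power_w + ir_power_w * ir_duty_cycle
    if average_power_w <= 0:
        raise ValueError("average power must be positive")
    return battery_wh * usable_fraction / average_power_w


if __name__ == "__main__":
    # Hypothetical robot: 50 Wh battery, 8 W base load, 4 W of IR LEDs.
    for duty in (1.0, 0.25):
        print(f"IR duty {duty:.2f} -> {runtime_hours(50, 8, 4, duty):.2f} h")
```

With these example figures, running the LEDs a quarter of the time instead of continuously lowers the average draw from 12 W to 9 W, which is the kind of saving that adaptive illumination is meant to deliver.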

How Do Cost and Complexity Impact the Deployment of Night Navigation Solutions?

Cost barriers and complexity challenges profoundly impact your deployment of night navigation solutions. Higher costs for advanced sensors and processing systems can limit your options, making it harder to implement effective solutions widely. Additionally, complexity in integrating sensors, algorithms, and power management increases development time and requires specialized expertise. These factors can slow down deployment, increase expenses, and restrict your ability to operate reliably in low-light conditions.

Conclusion

As you explore low-light navigation, remember that the field is still advancing quickly. Picture your robot gliding through darkness, its sensors piercing shadows and revealing hidden pathways. Each breakthrough opens new possibilities. Are you ready to put these techniques to work and unlock the night’s secrets? The next step could transform your robotic journey.